Welcome back to our discussion of the computing revolution and why Cyphr is investing in it. If you haven’t read Part 1, start there. For those who have, read on!

In Part 1, I explained how part of our investment thesis around “infrastructural excellence” relates to the development and enhancement of data centers and their impact on the future of AI. In this part, let’s dig into the other two prongs of our infrastructure investment thesis: dedicated AI chips, which are already making their way into consumer products and will increasingly do so, and AI-based intellectual property (IP) blocks, which companies sell as library components to be integrated into other chips.

In my opinion, these three chip-related technologies are the trifecta: we need all three to power the AI revolution we are undergoing.

Dedicated AI Chips and Consumer Products

Why Dedicated AI Chips Will Matter

We are going to see AI chips make their way into a wide range of consumer products as AI becomes more important for enhancing user experiences and enabling new functionality. For example, Apple is reported to be expanding its AI hardware capabilities by adding specialized functions to its chips to handle generative AI tasks.

These specialized processors, designed to efficiently handle AI and machine learning tasks, offer significant benefits over general-purpose processors in terms of performance, power efficiency, and the ability to run complex AI algorithms on-device, without always needing to connect to the cloud. The integration of dedicated AI chips into consumer products will represent a significant shift towards more intelligent, efficient, and personalized technology.

Notably, AI chips will enable devices to run AI on-device rather than in the cloud. This is HUGE. Right now, when you ask Siri a question, the request is often sent to the cloud, as are many other tasks on your phone. By processing AI tasks locally, devices can offer faster responses (no dependence on connectivity or network speed), better privacy and security, and new functionality that was previously impossible or required cloud-based processing. And when these tasks run locally, no one in the chain has to pay to run them in the cloud, which means significant cost savings.

Below are several consumer product categories where dedicated AI chips will become integral:

Smartphones and Tablets

AI chips integrated into smartphones and tablets will transform the user experience. They will enhance photography and videography through computational techniques like portrait mode and night mode, enable real-time video enhancement for smoother output, and power advanced features like super-resolution. They will also improve voice assistants and speech recognition, delivering faster responses and accurate processing of natural language queries without constant internet connectivity. And they will bolster device security by processing biometric data, such as facial recognition, on the device itself, keeping sensitive information local and reducing reliance on external servers for authentication.

Yes, most of the above already exists, but these features will get a lot better with the integration of AI chips. What you know as photography today on your iPhone will not look like photography on the iPhone of the future once an AI chip is installed, and it will change in ways we can’t yet predict.

Caveat: the same applies to everything else I note below, so keep that in mind. We also don’t know yet what the killer app for dedicated AI chips will be. So far, AI’s killer app has been ChatGPT.

Wearables and Health Devices

AI chips will revolutionize activity and health monitoring by enabling real-time analysis directly on wearable devices, from heart rate monitoring and sleep tracking to detecting health events. This allows for immediate feedback, making health monitoring and management more effective. AI chips will also process sensor data and manage device functions more efficiently, significantly extending battery life. That energy efficiency makes wearables more practical for continuous use, so users can rely on their devices for long periods without frequent recharging.

Home Automation and Security Cameras

AI chips are poised to transform smart home devices by enabling more intelligent voice-controlled assistants in gadgets such as smart speakers and home hubs. These chips let devices process commands locally, minimizing latency and keeping them functional even without an internet connection. In security cameras, AI chips make it possible to run sophisticated capabilities like real-time motion detection, facial recognition, and object identification directly on the device. Processing data locally enhances security and privacy, reducing both the need for constant internet connectivity and the risks of transmitting sensitive information over the network.

Automotive

AI chips are set to revolutionize Advanced Driver-Assistance Systems (ADAS) by supporting real-time processing of crucial features such as object detection, lane keeping, and autonomous driving functionality. These chips deliver the low latency and high reliability that safe, efficient driving systems demand. In in-car entertainment and assistance, AI chips will power innovations such as voice-activated controls, gesture recognition, and personalized in-car experiences. By processing these tasks on the device, AI chips make the car more responsive and tailor the driving experience to individual preferences, improving comfort and convenience for drivers and passengers alike.

Consumer Electronics

AI chips are poised to revolutionize smart TVs and streaming devices. They will improve content recommendations, voice interaction, and real-time AI upscaling of video content to higher resolutions, giving viewers tailored suggestions and seamless interaction with their devices. In gaming consoles and virtual reality, AI chips will power AI-driven game physics, non-player character behavior, and immersive environments, making gameplay more engaging and lifelike. Together, these advances represent a significant leap forward for entertainment technology, promising more immersive and personalized experiences across platforms.

Dedicated AI Chips v. Generic Chips

As AI technology continues to evolve, we may see further differentiation in how AI capabilities are integrated into consumer products. The decision between dedicated domain-specific chips versus general-purpose AI chips complemented by specialized software stacks will largely depend on the specific needs of the product, including performance requirements, cost constraints, and the need for flexibility in supporting multiple AI functionalities. Additionally, advancements in semiconductor technology and AI algorithms will continue to influence these decisions, potentially leading to new architectures and approaches that blend the benefits of both strategies.

Here’s how both approaches are shaping the landscape:

Domain-Specific Dedicated Chips

Highly Optimized Performance: For applications where performance and efficiency in a specific domain are critical, dedicated chips designed for that particular domain can offer significant advantages. These chips are optimized at the hardware level to execute specific types of algorithms or tasks, such as image processing for computational photography in smartphones or real-time motion detection in security cameras.

Energy Efficiency: Domain-specific chips can also be more energy-efficient for their intended task, extending the battery life of portable devices. This is particularly important for wearables and mobile devices.

Cost-Effectiveness for High-Volume Products: In high-volume products where a particular AI functionality is a key selling point, the economies of scale can justify the development and production costs of dedicated chips.

General AI Chips with Specialized Software Stacks

Flexibility and Upgradability: General AI chips, which are designed to handle a wide range of AI tasks, offer flexibility. When paired with a specialized software stack, these chips can be tailored to perform well across different domains. This approach allows manufacturers to update the device's capabilities over time through software updates, extending the product's lifespan and improving its value proposition.

Broader Application Range: Devices that aim to serve multiple AI-powered functions may benefit more from general AI chips. For example, a smartphone uses AI for photography, user interface personalization, voice recognition, and more. A general AI chip can handle all these tasks, with the software stack ensuring optimal performance for each application.

Development and Maintenance Cost: While developing a specialized software stack for different domains can be resource-intensive, the ability to use the same chip across multiple product lines can reduce overall hardware development and production costs.

Hybrid Approaches

Some devices may employ a hybrid approach, incorporating both general-purpose AI processors and domain-specific accelerators within the same system. This allows them to leverage the flexibility of general AI chips for a broad range of tasks while still benefiting from the efficiency and performance of specialized processors for key functions.
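To make the hybrid idea concrete, here is a minimal sketch of how a system might split a model’s operations between a domain-specific accelerator and a general-purpose processor. The operation names and the `ACCELERATOR_OPS` set are hypothetical; real toolchains make this decision with far more information (memory layout, data types, transfer costs), but the fallback pattern is the same.

```python
from dataclasses import dataclass

# Hypothetical: the set of operations a domain-specific accelerator supports.
ACCELERATOR_OPS = {"conv2d", "relu", "max_pool"}


@dataclass
class Op:
    name: str


def partition(ops):
    """Send each op to the accelerator if it supports it; otherwise fall back
    to the general-purpose processor."""
    return [
        (op.name, "accelerator" if op.name in ACCELERATOR_OPS else "general")
        for op in ops
    ]


model = [Op("conv2d"), Op("relu"), Op("layer_norm"), Op("max_pool"), Op("softmax")]
for name, target in partition(model):
    print(f"{name:>10} -> {target}")
```

The accelerator handles the operations it was built for, and everything else still runs, just on the more flexible processor.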

Chip Players

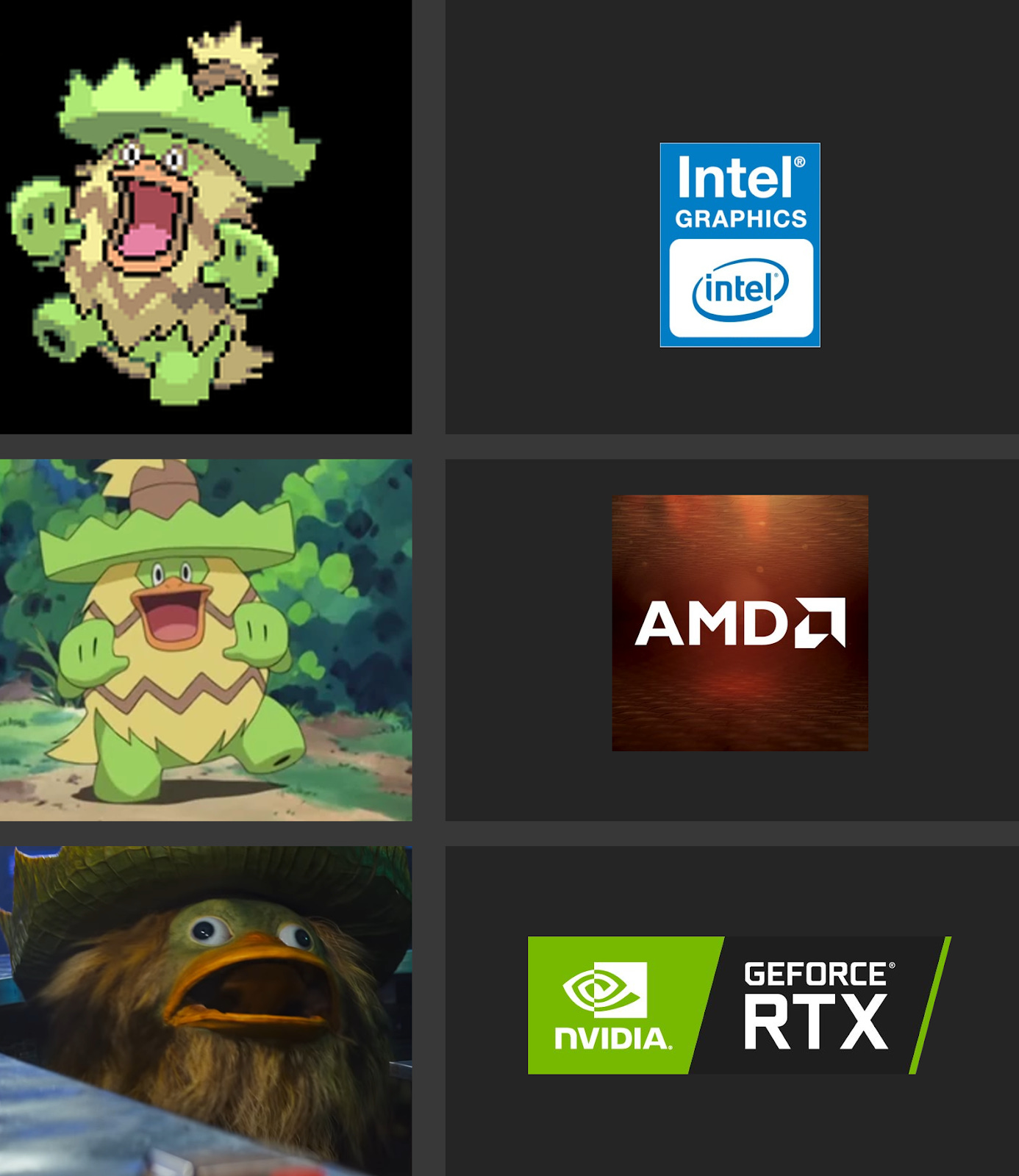

Several major companies are already deeply invested in developing dedicated AI chips, and this trend is likely to continue for the foreseeable future. Key players include technology giants like NVIDIA, Intel, AMD, Google, and Qualcomm. As has been widely discussed, NVIDIA’s GPUs have been broadly adopted for AI tasks, and the company has built specialized AI hardware, its Tensor Cores, into those GPUs. Intel and AMD are also actively working on AI chip solutions: Intel released products like the Intel Nervana Neural Network Processor (NNP), which it later discontinued in favor of its Habana Gaudi accelerators, and AMD is building AI capabilities into its CPUs and GPUs. Google developed its Tensor Processing Units (TPUs) to accelerate AI workloads in its data centers, while also investing in AI chip research for edge devices. Qualcomm is focusing on AI chip development for mobile and IoT applications through its Snapdragon platforms.

Intel and AMD are desperately trying to catch up to NVIDIA and challenge its GPU dominance by advancing their own GPU technologies. NVIDIA has traditionally dominated the market for discrete graphics cards, which turned out to be a far better fit for running AI applications than CPUs (more on GPUs v. CPUs next time in my primer). This boon was largely unplanned by NVIDIA: its GPUs were built for graphics, not AI. It’s kind of wild that they became the world’s third most valuable company almost by accident.

Beyond the giants, we are going to see startups and research institutions worldwide working on specialized AI chip designs. We are also going to see startups building generic chips that can be customized for a particular market, and smaller chips built to go into cheap consumer devices.

AI-Based Intellectual Properties

Of the three prongs of Cyphr’s infrastructure investment strategy, this one will likely occupy the smallest share of the portfolio, but it’s still worth covering.

First of all, when you saw the initial mention of AI-based IPs in the introduction, you may have wondered what the heck “IPs” are. No worries, I got your back. Let’s break it down into simpler terms. You know what IPs are - you just may not know that you know. :)

I’ll give you an example of the most famous IP company in the world: ARM. ARM’s revenues are about $2.9B per year. ARM doesn’t manufacture chips; it licenses processor designs, and the instruction set architecture behind them, that other companies configure and build into their own chips. Some licensees, like Apple, go further and design their own processor cores that implement the ARM architecture. EVERYONE uses ARM processors: virtually every Android phone and every iPhone runs on one, for example.

So in short, an IP is a pre-designed building block for a chip. It can come as “soft” IP, a design described in code (typically a hardware description language such as Verilog) that you license and that eventually becomes part of your hardware, or as “hard” IP, a ready-made physical layout that you drop directly into a chip. A simple example is USB: rather than designing their own, chipmakers commonly license a USB controller IP to give their chips a USB interface. There’s a market for both soft and hard IP for AI.

AI-based IPs specifically are ready-made building blocks that use AI technology. Companies create them and sell them as library components to other companies that want to integrate AI into their chips. They range from specific algorithmic functions to more comprehensive AI processing units, and they are designed to be easily integrated into other products, like computer chips, so buyers don’t have to develop AI technology from scratch. So, instead of reinventing the wheel, companies can use AI-based IPs to add smart features to their own products more quickly and efficiently.

The beauty of these IPs is that they’re relatively inexpensive to produce. Sure, you still need to work with a chip designer to create them, but you don’t need a fab or anything particularly complex.

By offering these AI-based IPs as ready-to-integrate components, companies enable device manufacturers to incorporate advanced AI capabilities into their products more easily and cost-effectively. This approach accelerates the development of AI-enabled devices across various sectors, including consumer electronics, automotive, healthcare, and IoT, by providing a modular, scalable way to enhance products with intelligent functionalities.

Here are some of the functions associated with these AI IPs:

Neural Network Accelerators

Convolutional Neural Network (CNN) Accelerators: Optimized for tasks involving image and video processing, such as facial recognition, object detection, and augmented reality (AR) applications.

Recurrent Neural Network (RNN) and Transformer Accelerators: Suited for processing sequential data, making them ideal for natural language processing (NLP), speech recognition, and time-series analysis.
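Why do CNNs benefit so much from dedicated hardware? Nearly all of their work is one repeated operation: the multiply-accumulate (MAC) inside a convolution. Here is a minimal pure-Python sketch of a single-channel 2D convolution (no padding, stride 1) that also counts the MACs; the example image and kernel are made up for illustration.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution (no padding, stride 1), written as the
    cross-correlation most CNN frameworks actually compute."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    out = [[0] * out_w for _ in range(out_h)]
    macs = 0
    for i in range(out_h):
        for j in range(out_w):
            acc = 0
            for di in range(kh):
                for dj in range(kw):
                    # One multiply-accumulate (MAC): the operation accelerators parallelize.
                    acc += image[i + di][j + dj] * kernel[di][dj]
                    macs += 1
            out[i][j] = acc
    return out, macs


# A 4x4 image with a vertical edge, and a 2x2 vertical-edge detector.
image = [[0, 0, 1, 1] for _ in range(4)]
kernel = [[-1, 1], [-1, 1]]
result, macs = conv2d(image, kernel)
print(result)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
print(macs)    # 36
```

Even this toy needs 36 MACs, and the count grows with image size, kernel size, and the number of channels, which is why an accelerator that runs many MACs in parallel pays off.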

Vision Processing Units (VPUs)

Image Signal Processing (ISP): Advanced image processing capabilities, including noise reduction, color enhancement, and dynamic range adjustment, critical for cameras and imaging devices.

Computer Vision: Algorithms for real-time object tracking, depth estimation, and gesture recognition, supporting applications in security cameras, drones, and automotive driver assistance systems.

Audio Processing Units

Voice Activation and Recognition: Enabling voice-controlled interfaces, these IPs can process and recognize spoken commands efficiently, even in noisy environments.

Sound Localization and Beamforming: Technologies for enhancing the clarity of audio capture in devices like smart speakers, enabling them to determine the direction of sound sources or focus audio pickup in specific directions.
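To show what beamforming means, here is a minimal sketch of the classic delay-and-sum technique, assuming two microphones and whole-sample delays. The tone and delays are made-up test values; production audio IPs use more microphones, fractional delays, and adaptive weights.

```python
import math


def delay_and_sum(channels, delays):
    """Delay-and-sum beamformer with integer sample delays.

    channels: equal-length lists of samples, one per microphone.
    delays: how many samples later the target direction's sound reaches each mic.
    Shifting each channel by its delay lines the target up before averaging,
    so sound from that direction adds coherently while other directions partially cancel.
    """
    n = len(channels[0])
    usable = n - max(delays)
    return [
        sum(ch[t + d] for ch, d in zip(channels, delays)) / len(channels)
        for t in range(usable)
    ]


def power(signal):
    """Mean squared amplitude of a signal."""
    return sum(x * x for x in signal) / len(signal)


# Two mics; a tone with a 6-sample period reaches mic 1 three samples after mic 0.
n = 60
mic0 = [math.sin(2 * math.pi * t / 6) for t in range(n)]
mic1 = [math.sin(2 * math.pi * (t - 3) / 6) for t in range(n)]

steered = delay_and_sum([mic0, mic1], delays=[0, 3])    # pointed at the source
unsteered = delay_and_sum([mic0, mic1], delays=[0, 0])  # pointed elsewhere
print(power(steered), power(unsteered))
```

Pointed at the source, the output keeps the tone at full strength; pointed elsewhere, the two copies are half a period apart and cancel almost completely. That directional selectivity is what lets a smart speaker focus on the person talking.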

Natural Language Processing Units

Language Understanding: Capabilities for parsing and understanding human language, supporting applications in text analysis, automated translation, and content recommendation.

Speech Synthesis: Generating human-like speech from text, used in virtual assistants, accessibility tools, and interactive entertainment.

Security and Encryption

Secure Enclaves: Dedicated processing units for handling sensitive information and cryptographic operations, ensuring data security and privacy in transactions and communications.

Anomaly Detection: AI algorithms designed to identify unusual patterns or behaviors indicative of security threats, fraud, or system failures.
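The core idea behind anomaly detection can be sketched without any machine learning at all. The version below swaps in a simple statistical rule (a rolling z-score) as a stand-in for the learned models an AI IP would typically use: it flags any value that sits far outside the recent baseline. The login counts, window size, and threshold are hypothetical.

```python
import statistics


def rolling_zscore_anomalies(values, window=5, threshold=3.0):
    """Flag indices whose value sits more than `threshold` standard deviations
    from the mean of the preceding `window` values."""
    flagged = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        mean = statistics.mean(baseline)
        std = statistics.pstdev(baseline)
        if std == 0:
            # A perfectly flat baseline: any change at all is unusual.
            if values[i] != mean:
                flagged.append(i)
            continue
        if abs(values[i] - mean) / std > threshold:
            flagged.append(i)
    return flagged


# Hypothetical failed-login counts per minute, with one burst.
failed_logins = [4, 5, 4, 6, 5, 5, 4, 40, 5, 6]
print(rolling_zscore_anomalies(failed_logins))  # [7]
```

A learned model replaces the fixed mean and threshold with patterns it picks up from data, but the job is the same: compare new behavior against what "normal" looks like.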

Energy Management

Power Optimization: AI-driven power management IPs that dynamically adjust power usage based on workload demands, improving energy efficiency in mobile devices and wearables.
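Here is a minimal sketch of the idea behind workload-aware power management: predict how busy the processor will be, then pick the lowest clock speed that still covers that demand. The frequency levels, headroom factor, and moving-average predictor are all hypothetical stand-ins; an AI-driven IP would replace the simple predictor with a learned model.

```python
# Hypothetical operating points (MHz) for an illustrative processor.
FREQ_LEVELS_MHZ = [300, 600, 1000, 1500, 2000]


def predict_utilization(history, alpha=0.5):
    """Exponential moving average of recent utilization (0..1), a simple
    stand-in for the learned workload predictor an AI-driven IP might use."""
    estimate = history[0]
    for u in history[1:]:
        estimate = alpha * u + (1 - alpha) * estimate
    return estimate


def pick_frequency(predicted_util, current_mhz, headroom=1.25):
    """Choose the lowest operating point that covers predicted demand plus headroom."""
    demand_mhz = predicted_util * current_mhz * headroom
    for f in FREQ_LEVELS_MHZ:
        if f >= demand_mhz:
            return f
    return FREQ_LEVELS_MHZ[-1]  # demand exceeds the top level: run flat out


# Light load at full speed: step down to save power.
print(pick_frequency(predict_utilization([0.3, 0.2, 0.2]), current_mhz=2000))  # 600
```

Stepping down saves energy because a chip’s dynamic power scales roughly with voltage squared times frequency, and lower frequencies usually allow lower voltages. The better the workload prediction, the more aggressively a device can step down without feeling sluggish.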

Sensor Fusion and Interpretation

Context Awareness: Combining data from multiple sensors to interpret the device's environment or the user's activity, supporting features like activity recognition and indoor navigation.
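A classic, simple example of sensor fusion is the complementary filter, which estimates a device’s tilt by combining two imperfect sensors: a gyroscope (smooth but drifts over time) and an accelerometer (noisy but drift-free). This is a minimal sketch; the gyro bias, sample rate, and blend factor are hypothetical, and context-awareness IPs combine many more signals than this.

```python
import math


def tilt_from_accel(ax, az):
    """Tilt angle (degrees) from gravity as measured on two accelerometer axes."""
    return math.degrees(math.atan2(ax, az))


def complementary_filter(angle, gyro_rate, accel_angle, dt, alpha=0.98):
    """Blend the integrated gyroscope rate (smooth but drifts) with the
    accelerometer tilt (noisy but drift-free)."""
    return alpha * (angle + gyro_rate * dt) + (1 - alpha) * accel_angle


# Device held still at 10 degrees; the gyro has a hypothetical 0.5 deg/s bias.
true_tilt = 10.0
ax, az = math.sin(math.radians(true_tilt)), math.cos(math.radians(true_tilt))
dt, bias = 0.01, 0.5

fused = gyro_only = true_tilt
for _ in range(1000):  # 10 seconds of samples
    gyro_only += bias * dt
    fused = complementary_filter(fused, bias, tilt_from_accel(ax, az), dt)

print(f"gyro only: {gyro_only:.2f} deg, fused: {fused:.2f} deg")
```

After ten seconds, the gyroscope alone has drifted about five degrees off, while the fused estimate stays within a fraction of a degree of the true tilt. Neither sensor is good enough alone; together they are.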

Call to Action

As mentioned in Part 1, if you are a startup solving any of the above problems, we’d love to hear from you. We are investing in the infrastructural systems needed for the Intelligence Renaissance and would love to be your first check.